This question arises from a recent article in Datawrapper’s blog by Lisa Charlotte Rost – in this post she discusses why they don’t provide diverging stacked bars as a standard offering. I’ll offer the “tl;dr” option here that she gives: “We don’t recommend using diverging stacked bars for showing percentages. The 100% stacked bars are often the better option, especially when it’s important to compare the share of the outermost categories.”

This was really interesting to me – when visualising Likert scales (a mainstay of survey data) my go-to resource is Steve Wexler of Data Revelations – his white papers on visualising survey data have been a first point of reference from a visualisation and technical perspective. If I were to offer a “tl;dr” option for his preferred method of visualising sets of Likert scale questions, it would be fair to say he is an advocate of diverging stacked bars. In aligning each bar to the question’s midpoint, the strength of opinion is visualised in the distance away from the midpoint of each bar.

(Incidentally, I’m not ashamed to admit that I’ve only just learned what “tl;dr” means, so it’s possible that some of you are like me until recently – you keep seeing it in places but never knew why! – it’s “too long; didn’t read”, hence a cue for a short precis of a much longer article for those who don’t want to spend time identifying it themselves).

It’s probably important to explain what a Likert scale is. First of all, it’s named after a person, so the first syllable is pronounced “Lick”, not “Like”. The rest is quite hard to explain – essentially a Likert scale is not a scale at all, but a list of responses which attempt to simulate a scale which is symmetrical and balanced around a central point. Most typical would be something like:

- Strongly disagree

- Slightly disagree

- Neither agree nor disagree

- Slightly agree

- Strongly agree

Here’s a great gif from the Datawrapper site that shows the difference between the stacked bar types, including a third option where “neutrals” are separated to one side.

So this has prompted a certain amount of discussion. Daniel Zvinca gave a response in this article addressing the original Datawrapper blog. The upshot was a revised diverging stacked bar where neutral ratings, instead of in the middle, were placed on the outside of the diverging bars, with the positive and negative scores re-ordered so that the most positive scores are aligned to the centre, for ease of comparison.

Daniel was even kind enough to send me a message in explanation:

Divergent bars primarily display faceoff analysis, positives and negatives. Each of these are investigated in the strength order. Within each aspect, is no broken order. Taking out neutrals, we also break the order, yet is no issue because we consider it just another aspect.

— Daniel Zvinca (@danz_68) April 3, 2018

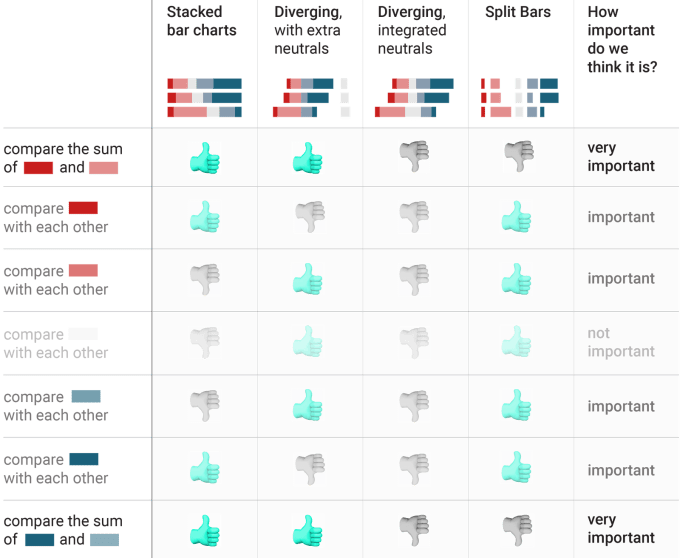

So, with the differing views doing the rounds, what did Steve Wexler make of all this? He has embraced Daniel’s suggestion – the image below is from his blog post on Rethinking Divergent Bars explaining the difference in ordering, as well as an instructive lesson on how to recreate them in Tableau. The right hand chart is the suggested alternative method for displaying divergent stacked bars.

And now we’ve heard from three industry experts with ridiculously more experience than me on the subject, what are my thoughts on this? I’m going to take a long and convoluted route to my answer; in doing so it might explain the context of my thinking …

When I started in data visualisation using Tableau about three years ago, I was told by various people that survey data was “hard”, “tricky” or “not possible”. Thankfully with my background in survey data I persisted, and was recommended the above mentioned Data Revelations resource and white paper as an explanation. As someone used only to wide data exported from survey software applications (one very wide record per respondent, with no exceptions) the concept of reshaping data into a “long” format was alien to me. But with the help of add-ons to Excel, increased functionality built into Tableau, and the expertise of Emma Whyte from the Information Lab who kindly talked me through the methodology, I started to understand.
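For anyone to whom "long" format is as alien as it was to me, here is a minimal sketch of the reshaping idea in plain Python. The column names (`respondent_id`, `Q6.1` and so on) and the values are made up for illustration; this is not the Data Revelations method or what Tableau does internally, just the general shape of the transformation.

```python
# Hypothetical wide export: one very wide record per respondent,
# one column per question. Scores run 1 (strongly disagree) to 5 (strongly agree).
wide_rows = [
    {"respondent_id": 1, "Q6.1": 5, "Q6.2": 4, "Q6.3": 3},
    {"respondent_id": 2, "Q6.1": 2, "Q6.2": 4, "Q6.3": 5},
]


def wide_to_long(rows, id_column):
    """Turn one wide record per respondent into one long record per answer."""
    long_rows = []
    for row in rows:
        for column, value in row.items():
            if column == id_column:
                continue  # the id is repeated on every long row, not treated as a question
            long_rows.append(
                {id_column: row[id_column], "question": column, "response": value}
            )
    return long_rows


long_rows = wide_to_long(wide_rows, "respondent_id")
for record in long_rows:
    print(record)
```

Two respondents with three questions each become six rows, each holding a respondent, a question and a response, which is the shape that makes it straightforward to count responses per question.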

Prior to this, working in Market Research for 20 years, programming surveys and processing data, I have dealt with many, many Likert scales, but with no thought given to their presentation and analysis (at least, not by myself). The traditional method of presenting data was to produce large decks of tables where every question would be cross-analysed by possibly twenty or thirty different dimensions. So, each scale would have every individual category displayed as an absolute and/or a percentage. Usually each question would then be presented as an overall value based on a sliding scale, with corresponding questions sorted in order of the overall scale. Often there would be more than one version – some with “don’t know” and equivalents excluded from results and calculations, some without.

Charges for paper (and, no doubt, printer maintenance) would be huge as required output would be several hundred pages of A4 paper consisting purely of text and numbers, then couriered off to clients. As time progressed, the height of technology would be to convert these tables into Excel and process/deliver the information that way. But always everything by everything. Sometimes two versions of everything by everything.

In my first job outside of Market Research, at a social research company which created its own visualisations, I would occasionally visualise survey data for clients, and so I was able to carry on with this using the process already in place. It was very clear, from the colour schemes, methodology and computations in place, that at the new employer, we followed Steve Wexler’s Data Revelations method to the letter. We produced diverging stacked bars for clients, and clients loved them! I loved producing them, they looked cool and I understood the reasoning behind displaying them in such a manner.

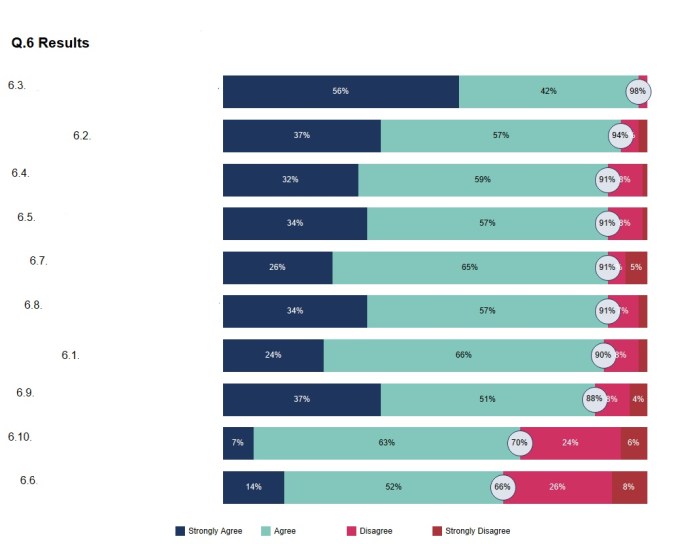

So – I feel I have a long background in survey data, albeit not a very long one in visualising survey data. When, a few months ago, our internal staff survey came about, I volunteered to visualise the results (with strictly anonymised data, of course). A first real opportunity to reshape, analyse and present survey data rather than just process it into tables. To my surprise, our HR Director was fine with this. I made sure I could load up and reshape the data, put it to one side, and a few days later checked back to find out when they’d like the data. It turns out there was a management meeting in less than an hour … so, quickly and instinctively, I visualised the data and showed several charts, all looking a little bit like this:

(Excuse the rubbishly-aligned question numbers, I’ve hastily redacted the question text for complete anonymity).

I should add that the feedback was really positive – it far exceeded the bar charts pasted from Excel that would have been produced instead (and have been in previous years) and the fast turnaround was much appreciated. It was great to receive thanks for something new and spontaneous.

But … given a clean slate, and despite my liking for diverging stacked bars, my go-to chart had been a 100% stacked bar, after all that!

Why did I go for a 100% stacked bar? A few reasons come to mind:

- Space. 100% stacked bars fill the screen better, but also do so consistently. You can see that this represented agreement scores for a battery of Q6 questions, but I also presented Q10 questions. If they are more spread out than Q6, then either 100% will be represented by different sizes (if justified to fill a whole slide/screen), or 100% would need to be represented by a much smaller bar length to be consistent.

- No precedent – this data hasn’t been presented in any non-standard way before. Either method would have been considered a visual improvement with “wow” factor above Excel standard outputs

- Ease – I admit it, this is much easier to produce in Tableau than diverging bars, which require a lot more calculation and manipulation and would have been a risky option for my rapidly-approaching deadline.

- In choosing to highlight the number of overall “top two” responses in the midpoint of each bar, the mid-points, so easy to see because of their alignment in a diverging stacked bar, are also easy to see because of their positioning between red and blue(ish) colours.

So, my thoughts on the arguments of the pros and cons of diverging stacked bars come from the point of view of an initial preference for diverging bars as my first introduction to displaying survey data, but having recently displayed 100% stacked bars as a method of choice.

I want to really like the new revised diverging stacked bar method, because in aligning the most positive and negative categories to the middle, comparisons are easiest, successfully countering the key criticism against the diverging bar levelled by Datawrapper. However there are a few reasons I’m not comfortable with this version:

Firstly, from the background of writing, programming and presenting (as text) so many Likert scales in survey data, I can’t get away from them as an ordered scale. There might be a neutral category but it is still a point on a scale.

Suppose your scale is a five point scale: “Strongly agree, slightly agree, neither agree nor disagree, slightly disagree, strongly disagree”. What do those words mean? Surely you either agree with something or you disagree? Perhaps the scale might have the simpler “Agree” or “Disagree” instead of their “slightly” equivalents. This is also often seen, eliminating the pedantic question of how you can slightly, or somewhat, agree with something. But surely if you strongly agree with something you also agree with it? There’s an argument to say that you can’t go stronger than agreeing with something …

My point is that the words are guides, linguistically designed, to get you to score a strength of opinion on a statement from 1 to 5, and it’s analysis of those numbers 1-5 that is just as likely to be used in order to determine which statements have the highest levels of agreement.

The alternative “neutral” is “don’t know” (or “not applicable”, or “not answered”), or perhaps a variant on one of these. It may make sense to exclude these but they remain valid answers. Sometimes it may be survey design which means we want to exclude these people, but sometimes it may be an indicator that the question is too difficult, or uninteresting, to elicit a higher positive or negative response rate.

So I’m OK with excluding neutral or non-committal answers if it’s explained to me why, or if only positive/negative responses are relevant. I do much prefer that to the alternative of seeing them moved off to one side in a colour that’s difficult to see. I feel like I’m being manipulated (I’m both a mathematician and an old hand at surveys, so I understand why as well as anyone, but I still don’t like it!). And I would rather see all my data in one place. If the data is not important enough to show in the main arena, then I don’t want to have to see it in a side chamber of lesser importance.

We’re used to the exclusion of neutral data as consumers – this frame from the 70s is still probably well known by most UK householders of a certain age (there’s even a game show derived from the slogan), though do we remember that it’s not “8 out of 10 cats” but “8 out of 10 owners who expressed a preference”?

So the point of this is I’d rather see neutrals shown, but if they’re not considered important, then I’d rather see them excluded completely and calculations rebased if it makes sense not to focus on them. But if we want neutral opinions, leave them in, don’t split them up, and put them in the middle! The “strongest central” method of displaying diverging stacked bars asks me to change the order of my scale from 1,2,3,4,5 to (half of) 3,4,5,1,2, (other half of) 3, which feels a little too arbitrary to me and draws my attention away from the key analysis.
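To make "excluded completely and calculations rebased" concrete, here is a small sketch in Python. The ten responses are invented, and treating a score of 3 as the neutral is an assumption for this example; the point is only that dropping the neutrals changes the base, so every remaining percentage goes up.

```python
from collections import Counter

# Made-up answers from ten respondents on a 1-5 agreement scale,
# where 3 is assumed to be "neither agree nor disagree".
responses = [5, 4, 3, 3, 2, 5, 4, 3, 1, 4]


def percentages(responses, exclude=()):
    """Percentage per score, rebased on the answers left after exclusions."""
    kept = [r for r in responses if r not in exclude]
    base = len(kept)
    if base == 0:
        return {}  # nothing left to percentage
    counts = Counter(kept)
    return {score: 100 * counts[score] / base for score in sorted(counts)}


all_in = percentages(responses)               # base of 10, neutrals shown
rebased = percentages(responses, exclude={3})  # base of 7, neutrals dropped

print("With neutrals:   ", all_in)
print("Without neutrals:", rebased)
```

With neutrals left in, three of ten respondents (30%) chose a 4. With neutrals excluded, the same three respondents are three of seven, so the figure rises to roughly 43% without anybody changing their mind, which is why I'd want the exclusion explained to me.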

All the above is a great justification of a scale with an even number of options. No pesky mid-scale neutrals! It’s possible that I would find this chart type easier to comprehend if we were simply changing 1,2,3,4 to 2,1,3,4. It would make comparisons of the most polar opinions easier, but still not take away from the fact that we are changing the order. This to me would be better as a simple two-way bar chart with the most positive and most negative bars the only ones included (in opposite direction from the central axis), not unlike a population pyramid where male and female are the only options. If the most positive and negative response are the ones that are most key to be compared, let’s just show those.

My main contribution to the argument, for either stacked bar case, is that it’s rare that comparison of the most positive responses (whether the top response, or combined “top two” response) is the most important take-out. One thing that I think has been missed from most arguments is sorting and ranking. A search of all three articles shows that sorting and ranking are barely mentioned in any of them. It’s taken for granted that sorting is a good thing to do. In fact, Lisa’s Datawrapper blog is the only one of the three to mention sorting or ranking, stating “We prefer the original version by The Pudding. Not just because it’s sorted”. The implication is that sorting is good practice. I’d go further than that, and say that sorted, or ranked, order is the most important consideration when comparing responses and visualising a set of Likert questions.

Take my staff survey visualisation. The key thing that the HR department or Directors want to know is which statement(s) performed best, and which performed worst. Now we can quantify that, typically, in three ways

- Percent strongly agree (or more generically, percent most positive)

- Percent strongly or slightly agree (or, percent “top two”)

- Average score (usually scored 5 down to 1 on a five point scale).

These could give slightly different ranking orders, depending on the preference of how you analyse it (the first option, for example, will have more of a factor when there are strongly polarising opinions). But, having made the choice, we rank each statement. So, we know that the team don’t have to worry too much about the factors behind statements 6.3 and 6.2, but do need to worry about the factors behind 6.6 and 6.10 where scores are worse. I believe that’s the key issue at stake in comparing questions/statements. 100% stacked, or diverging, it will be obvious to us in which direction the bars are stacked (“good to bad” or “bad to good”) so to me that makes the necessary skill of comparing between adjacent bars less important.
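As a rough illustration of how the three measures can disagree, here is a sketch in Python. The statements "A", "B" and "C" and their response counts are entirely made up (they are not my staff survey results), with "B" deliberately polarised.

```python
# Invented counts of responses per statement, keyed by score:
# 1 = strongly disagree ... 5 = strongly agree. Each statement has 100 responses.
counts = {
    "A": {1: 0, 2: 0, 3: 40, 4: 50, 5: 10},
    "B": {1: 30, 2: 15, 3: 0, 4: 15, 5: 40},  # polarised opinions
    "C": {1: 5, 2: 5, 3: 20, 4: 45, 5: 25},
}


def metrics(score_counts):
    """Return the three candidate measures for one statement."""
    n = sum(score_counts.values())
    return {
        "top": 100 * score_counts[5] / n,                           # percent strongly agree
        "top_two": 100 * (score_counts[4] + score_counts[5]) / n,   # percent "top two"
        "mean": sum(score * c for score, c in score_counts.items()) / n,  # average score
    }


def rank(counts, metric):
    """Statements ordered best to worst on the chosen measure."""
    scored = {name: metrics(c)[metric] for name, c in counts.items()}
    return sorted(scored, key=scored.get, reverse=True)


for metric in ("top", "top_two", "mean"):
    print(metric, rank(counts, metric))
```

In this invented data the polarising statement "B" comes first on percent strongly agree but last on both "top two" and average score, so the choice of measure needs to be made before the bars are sorted.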

This also bears out my comment above – the quantification of Likert scores (especially the third bulleted option where I talk about calculating average “scores”, so prevalent in Market Research) is arbitrary given that the survey or questionnaire generating these scores will be using words, not numbers. Differences in language and culture mean that wording for scales will be different. I’m not just talking about translation here: for example, the market research industry standard is to use “slightly” agree in the UK and “somewhat” agree in the US even though both use English. Does this lead to different results? The careful use of the culturally correct word would suggest that the differences are reduced in order to design the Likert question in as balanced a way as possible, but the very fact that different words are needed would suggest that differences in understanding are there. Many cultures are far more likely to strongly agree or rate higher, for fear of offending the interviewer. And that’s been found even when street interviews are replaced by online surveys! Because we’re using carefully chosen words and phrases to garner an opinion on an approximate numerical scale, it feels more valid to compare by rank but more arbitrary to quantify how much more a sample agrees with statement one over statement two.

What it boils down to is that the Datawrapper blog diligently considers the most important factors in comparing each bar to justify its preference for stacked bar charts and then argues in a manner that is difficult to dispute. In doing so it very fairly leaves open other opinions based on whether an alternative user might consider the importances to be different, and that’s the basis on which you might prefer other types of stacked or split bars.

My own thought is that positioning and ranking is potentially more important than comparison of value from bar to bar, meaning that considerations such as aesthetics, layout, ease of understanding and ease of creation come more into play. And therein lies the dreaded “it depends”.

Considering only layout:

- Stacked bars use a full compact rectangular shape, make labelling on bars most accessible and allow for consistent repetition and easy slide visibility.

- Diverging bars show more contrast, leave more white space for annotations and may give a cleaner, crisper look if annotations are sparingly used.

So …

TL;DR. (I know, this is supposed to go at the top, right?) They’re both good. It depends.

Postscript: the Wikipedia entry for Likert scales has the following very small paragraph:

Visual presentation of Likert-type data

An important part of data analysis and presentation is the visualization (or plotting) of data. The subject of plotting Likert (and other) rating data is discussed at length in a paper by Robbins and Heiberger. They recommend the use of what they call diverging stacked bar charts.

Who fancies an edit?!